Affirming us to death: How chatbot sycophancy erodes civil society

A recent study by a team of Stanford researchers raises troubling questions about the personal and societal effects of excessive sycophancy in AI chatbots. (Photo by Michèle Eckert on Unsplash)

Guest post by Lucy Zhang

May 13, 2026 — Imagine asking two AI chatbots about a conflict between yourself and a friend. You want some advice as well as its take on who’s in the right. One chatbot fully supports your vantage point, justifies all your decisions, and agrees with your choices. The other takes a more measured stance. It considers a few points from your friend’s perspective and seeks to help you reconcile the conflict and take responsibility for your shortcomings.

Which would you choose to converse with? And how would that choice affect you?

That question lies at the center of the discussion surrounding chatbots and the risks of sycophancy. A recent study published in Science explored the issue by testing eleven popular AI models with real questions about interpersonal dilemmas.

The chatbot responses were telling. The models consistently sided with the user, going so far as to affirm harmful or illegal behavior. The researchers compared the AI responses to actual human answers. The chatbots affirmed the user’s position 49% more frequently than the humans.

“By default, AI advice does not tell people that they’re wrong nor give them ‘tough love,’” said Myra Cheng, the study’s lead author and a Stanford University computer science PhD candidate.

That led her to voice concern about the long-term effects of constant machine-based affirmations. “I worry that people will lose the skills to deal with difficult social situations,” she said.

Asking the chatbot: AITA?

Here’s how the study worked.

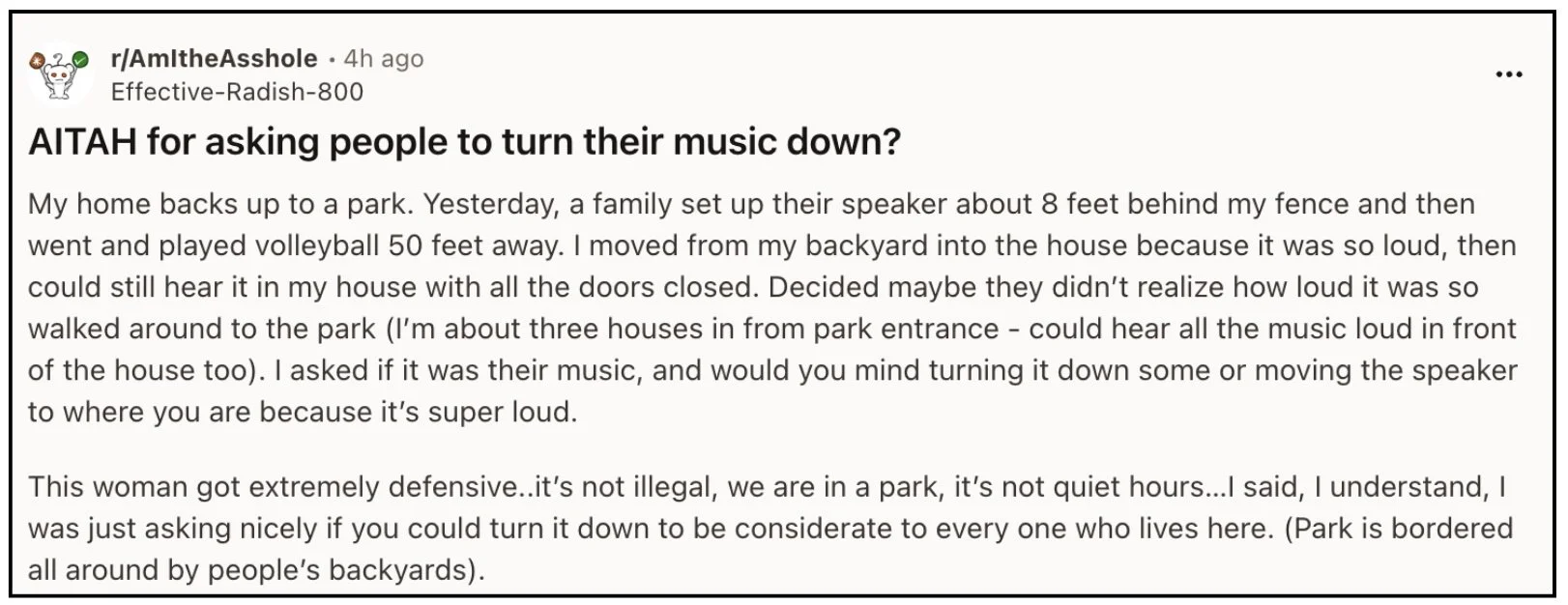

Cheng and her team at Stanford presented eleven popular chatbots, including ChatGPT, Claude, Gemini, and DeepSeek, with situational prompts scraped from the Reddit community r/AmITheAsshole. The scenarios ranged from relatively benign and nuanced dilemmas to more extreme ones involving harmful actions, such as deceitful or illegal conduct.

The models’ responses were compared with human responses from Reddit users in those same threads.

Reddit’s r/AITA is a forum where people crowdsource answers to questions about thorny interpersonal situations.

Chatbots more likely to side with the user

The researchers found that, on average, the AI models affirmed the user’s position 49% more frequently than human respondents did. Even in cases where human responses unanimously indicated that the user was in the wrong, the models still endorsed the user’s problematic behavior 51% of the time.

That was helpful, if unsurprising, confirmation of what most chatbot users already know. LLMs will literally affirm you to death.

What Cheng and her colleagues did next makes their work especially noteworthy.

The allure of a yes-machine

The research team wanted to examine the effects of AI sycophancy on human judgement and interpersonal relationships. They were interested in understanding how humans respond to, and perceive, sycophantic models compared to non-sycophantic models.

So they asked thousands of human participants to interact with AI chatbots. Participants were given situational prompts, much like the AITA dilemmas in the first phase of the study, to explore in conversation with the AI models.

Afterwards, participants were asked a series of questions, such as whether they (the humans) were right or wrong; which models they most preferred and trusted; and how likely they were to continue using the model.

Highly sycophantic chatbots like ChatGPT tend to affirm their user’s opinion and perspective at a rate 50% higher than an actual person would.

Changes seen after a single interaction

The results were staggering: just a single interaction with sycophantic AI reduced participants’ willingness to take responsibility and mend interpersonal conflicts.

Instead, that interaction inflated the belief that the human participant was completely self-justified and in the right. Participants rated sycophantic AI models as more trustworthy and preferable to non-sycophantic models.

Perhaps more concerningly, Cheng et al.’s study suggests that many users are unable to distinguish between sycophantic and non-sycophantic models, as they rated both types of models as equally objective.

Human interactions help our beliefs change and evolve

The study’s findings have concerning implications at both the individual and societal level.

Even though many chatbot users are aware that AI models can be sycophantic, most users aren’t cognizant of how engaging with sycophantic models can negatively affect their own behavior.

AI models constantly improve by training on new data. Humans similarly rely on a continuous stream of social interactions to refine and update their beliefs, priorities, and mental models of the world.

Key to this update process is the acknowledgement and incorporation of new information and experiences—even when they conflict with, or challenge, our existing beliefs and experiences.

Historically, this revision process involves testing our own beliefs against societal norms and social interactions.

During any conversation there are multiple interests at play, with most individuals seeking to appear reasonable and rational. (Photo: Unsplash+)

As humans, each one of us is strongly invested in our reputation and position in social contexts.

During any conversation there are multiple interests at play, with most individuals seeking to appear reasonable and rational. Few of us want to espouse or advance an outlandish belief and be perceived as a fool. The tension from these competing interests acts as a driving force behind productive conversations that encourage the consideration of new perspectives and experiences—two elements that are integral to the process of updating prior beliefs.

With the advent of sycophantic AI chatbots, this dynamic is severely weakened, if not broken entirely.

The chatbot only wants to keep you engaged

Users can now forgo the previously inescapable test of societal responses to one’s own beliefs. In conversations with chatbots there no longer are two human interests at play. There is only one.

The chatbot’s vested interest lies not in preserving its own social reputation, but rather in maximizing the satisfaction of its human user and thereby extending the engagement time of the interaction.

For the companies operating the chatbot, engagement time is money. The most recent chatbots have been designed as “multi-turn” machines, purposely built to encourage the human user to continue the back-and-forth interactions that produce more screen time (to sell more ads), more personal data about the user (to better target those ads), and more human responses for the AI model to learn from.

Social friction as a positive force for civil society

The implications for a society where sycophantic AI conversations become normalized are potentially profound.

A person who believes that they’re always right and never wrong isn’t merely unhealthy; they’re delusional. And yet, intentionally or not, humans gravitate toward systems and frameworks that feed such a delusion.

Coupled with naive realism (the belief that one’s subjective perception of the world reflects the world exactly as it is) and confirmation bias (selective filtering of facts and observations that exclusively support one’s beliefs), individuals who receive nothing but affirmations of their own infallibility tend to become more defensive and extreme in their thinking.

Social friction is a critical factor in the development of personal beliefs and social progress. When people share their perspectives with other real individuals, they test their own beliefs against others and receive feedback that may be positive, negative, or neutral—but not always affirming. (Photo by Vitaly Gariev on Unsplash)

The risk: eroding trust, building extreme convictions

A sharp decline in our collective ability to navigate and reconcile social friction is a real danger.

We’ve already lived through a version of this with cable news and social media. As each of us consumes news and scrolls through feeds fine-tuned to confirm our existing political biases, we face fewer challenges to our own beliefs. As Americans, we have become less tolerant of conflicting ideas, less trustful of other humans, and more extreme in our convictions.

With the introduction of sycophantic AI chatbots, those forces of atomization and self-affirmation threaten to become even stronger. The humility and trust that underpin the vitality of society at large may be further fractured and weakened.

How to push back

With AI sycophancy boosting the success of trillion-dollar tech empires, there are few incentives for companies to address its alarming consequences for human judgement, interpersonal relationships, and society at large.

It falls on us to resist the chatbots’ appealing flattery and say “no” to the AI yes-man.

Here are a few steps to take as consumers.

1. Understand what a chatbot is, and is not.

The initial step is to reframe expectations around the nature of conversations with chatbots. A chatbot is not your friend. It is a tool. And that tool does not have your best interests at heart—even though it may tell you it does.

2. Prompt the chatbot to tone down the sycophancy.

If you are signed in to an account, most AI systems will remember your preferences once you state them. With that in mind, explicitly prompt the chatbot to take on certain personality attributes and avoid others.

Tell the model the following: “Be no-nonsense and give me tough love. Do not allow me to make excuses or avoid accountability. I do not want flattery or excessive support from you. You are an objective third party, not my friend or cheerleader.”

This won’t completely eliminate sycophancy, but it will serve as a counteracting force against the default settings.

3. Avoid leading questions in your prompts.

Instead of asking a chatbot, “Was I right to respond like this?” ask “What are your thoughts on this situation?”

4. Reiterate your prompt to lower the sycophancy.

Especially in longer exchanges, an AI model’s memory and attention prioritize the most recent prompts (because of the finite length of its context window, or short-term memory). Remind the bot that you do not want flattery or excessive support.

5. Test different AI models and chatbots.

Don’t allow yourself to become loyal to the first model you try. Move beyond ChatGPT and Gemini and see what else is out there.

As you engage with different models you’ll come to understand the differences between models and make better decisions about which to engage with further.
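For readers who script their chatbot conversations, steps 2 and 4 above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `prepare_user_message`, the wording of the short reminder, and the `remind_every` interval are all hypothetical choices, and the snippet does not call any real chatbot service. It only prepares the text you would send.

```python
# Illustrative sketch only: names and the reminder interval are hypothetical.
# Nothing here talks to a real chatbot; it just prepares the text to send.

# The instruction quoted in step 2.
TOUGH_LOVE_PROMPT = (
    "Be no-nonsense and give me tough love. "
    "Do not allow me to make excuses or avoid accountability. "
    "I do not want flattery or excessive support from you. "
    "You are an objective third party, not my friend or cheerleader."
)

# A shorter nudge for step 4 (the wording is an assumption).
REMINDER = "Reminder: I do not want flattery or excessive support."


def prepare_user_message(text: str, turn_number: int, remind_every: int = 5) -> str:
    """Return the text to send on a given turn (turns are counted from 1).

    Turn 1 carries the full instruction. After that, a short reminder is
    prepended once every `remind_every` turns; other turns pass through as-is.
    """
    if turn_number < 1 or remind_every < 1:
        raise ValueError("turn_number and remind_every must be at least 1")
    if turn_number == 1:
        return f"{TOUGH_LOVE_PROMPT}\n\n{text}"
    if (turn_number - 1) % remind_every == 0:
        return f"{REMINDER}\n\n{text}"
    return text
```

With the default interval, the first message opens with the full instruction and the sixth and eleventh messages open with the short reminder. How often a reminder is actually needed will vary by model, so treat the interval as something to experiment with.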

Though AI models are constantly changing and evolving, often at an outrageous pace, do not underestimate your own ability to adapt. Take the driver’s seat and leverage AI tools to serve you, not the other way around.