Oregon Gov. Kotek signs chatbot bill into law, in significant win for kids and AI safety

Oregon legislators have given final passage to a bill that requires chatbot operators to implement strong measures to protect kids who interact with the products.

April 1, 2026 update — Oregon Gov. Tina Kotek yesterday signed SB 1546 into law. The provisions and requirements specified in the measure will become effective on Jan. 1, 2027.

March 5, 2026 — Oregon legislators earlier this morning gave final approval to SB 1546, a bill that requires AI operators to implement strong safety protections for kids using chatbots.

The bill’s passage, a top priority for the Transparency Coalition this year, is a significant win for kids, parents, and all consumers who wish to use AI chatbots safely. The bill proved to be extremely popular with lawmakers. It passed the Senate on a 26-1 vote and was approved by the House, 52-0.

The measure now goes to the desk of Gov. Tina Kotek, who has five business days to sign, veto, or allow the bill to pass into law without her signature.

Why this bill matters

Oregon’s SB 1546, sponsored by Sen. Lisa Reynolds, stands as the first major AI chatbot measure to pass in 2026. It follows the milestone passage of the nation’s first chatbot safety measure, California’s SB 243, which was signed into law by Gov. Gavin Newsom last October.

The original full text of SB 1546 is here. The bill’s progress, and revised versions, may be found here.

Top priority for Transparency Coalition

Oregon’s chatbot safety bill has been a top priority for the Transparency Coalition in 2026. TCAI’s experts have been in Salem working with the bill’s sponsor, Sen. Lisa Reynolds, and testifying before state legislators considering the bill.

Sen. Reynolds gave a synopsis of the bill in a conversation with Transparency Coalition COO Jai Jaisimha last month:

Bill summary

SB 1546 requires operators of artificial intelligence (AI) chatbots to issue certain notifications and implement precautions for all users, and adds additional protocols for a user who the operator has reason to believe may be a minor.

The bill requires operators of AI chatbots to:

tell users they are talking to AI, not a human,

implement protocols for preventing outputs that cause suicidal feelings or thoughts,

implement special protocols if the AI system operator has reason to believe the user is a minor, and

report each year to the Oregon Health Authority concerning incidents in which users were referred to resources to prevent suicidal ideation, suicide, or self-harm.

The bill also allows a user who has suffered ascertainable harm to bring an action for damages and injunctive relief.

What’s in it specifically for families and kids

Why the “reason to believe” language is important: Tech companies have designed sophisticated systems to identify kids online. Bad operators sometimes hide behind the legal argument that they lack absolute proof that a user is underage. The bill’s language closes that loophole and holds tech companies accountable.

Initial warning for kids: The bill requires chatbot operators to state, up front, that the chatbot may not be suitable for kids.

Disclosure that the chatbot is AI, not human: When interacting with a minor, the bill requires a chatbot to remind the user that the user is interacting with an AI system, not a real human. This reminder must be repeated at least once per hour.

No deception allowed: The bill prohibits a chatbot interacting with a minor from misrepresenting the chatbot’s identity or falsely claiming to be anything other than an AI system.

Regular reminder to take a break: The bill requires chatbots to provide kids with a clear and conspicuous reminder, repeated at least once per hour, that the user should take a break from interacting with the chatbot.

No sexual content allowed: The bill requires chatbot operators to ensure that when interacting with minors, the chatbot does not produce sexually explicit content or state that the minor should engage in sexually explicit conduct.

No addictive algorithms: The bill prohibits a chatbot, when interacting with a minor, from delivering a system of rewards or affirmations designed to maximize the minor’s engagement time with the chatbot.

No emotional manipulation allowed: The bill prohibits a chatbot interacting with a minor from generating messages of emotional distress, loneliness, or abandonment in response to a user’s desire to end a conversation or delete an account.

Required protocols to prevent suicidal outputs: The bill requires chatbot operators to prevent responses that could cause suicidal feelings or thoughts. This is a requirement for interactions with users of all ages.

Required protocols for suicidal/self-harm interest: The bill requires all chatbot operators to identify when a user (of any age) indicates suicidal ideation or interest in self-harm, and to refer the user to appropriate mental health resources.

More chatbot safety measures coming in other states

TCAI continues to monitor and document the advance of similar chatbot safety bills in a number of other states.

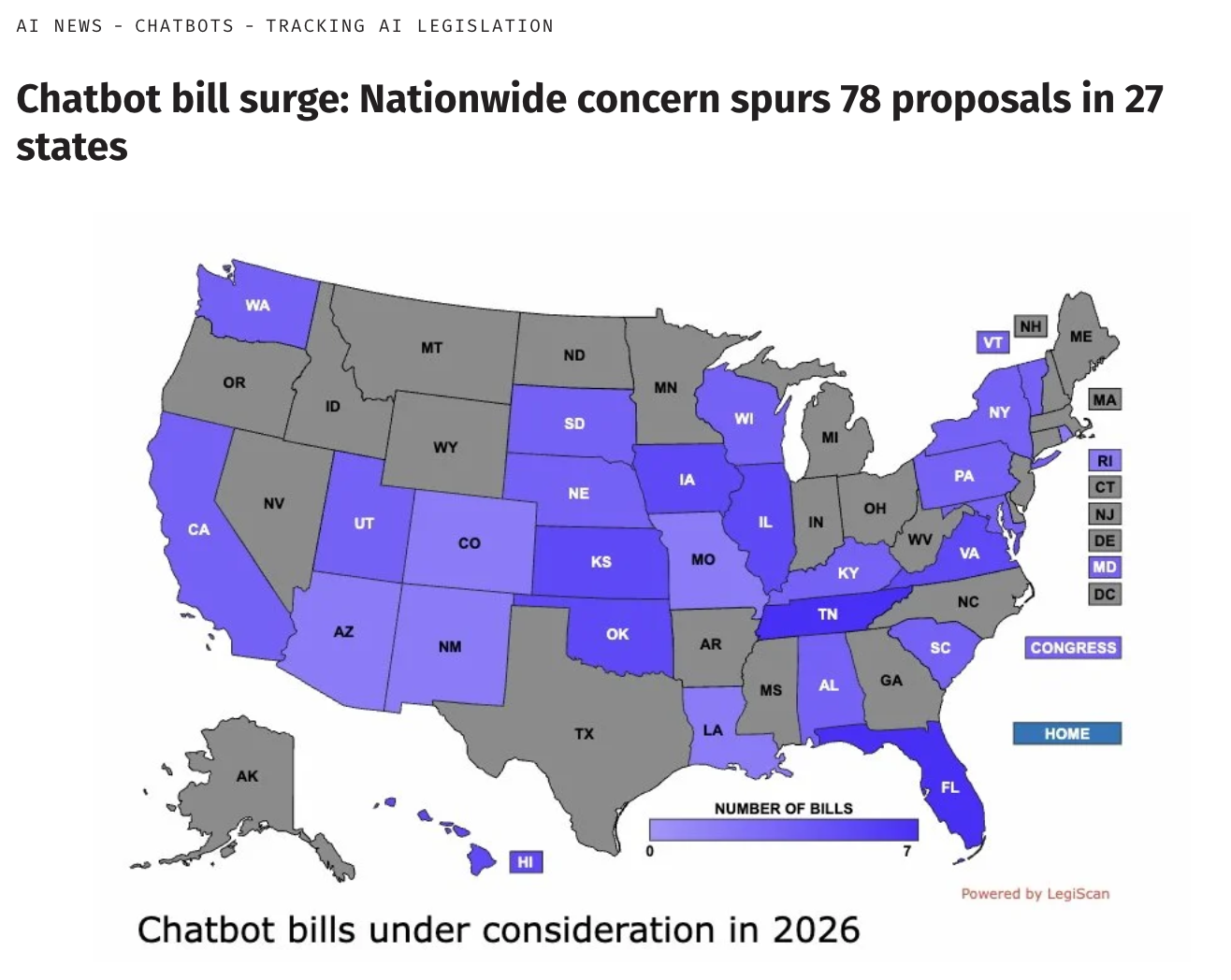

As of late February, at least 78 chatbot-related bills had been introduced across 27 states. Chatbot safety is proving to be a rare bipartisan issue, with both Republicans and Democrats sponsoring safety bills.

Oregon’s chatbot bill is the first to pass in 2026, and similar safety measures are nearing final approval in Washington and Utah.

For all the latest on AI-related bill movement, sign up for our AI Legislative Update newsletter, published every Friday morning during the legislative season.