TCAI Guide: Understanding the rise of OpenClaw and open-source AI agents

In just three months, OpenClaw has sparked a surge of interest in AI agents and a boom in the creation of autonomous bots. We explain what it is, where it came from, and how to consider the upside along with the risks.

March 30, 2026 — As part of our ongoing mission to offer clear, nonpartisan information about artificial intelligence, this week the Transparency Coalition (TCAI) is launching a series of posts about AI agents.

AI agents aren’t exactly new. We wrote about them last year. But the release of OpenClaw, a new kind of open-source agent builder, has taken the AI development world by storm in the past three months.

We’re seeing AI agents being built and unleashed at an unprecedented rate.

We’re also seeing the theoretical risks of these agents turn into real-world problems.

To help those outside the tech world understand what’s going on, we’ve compiled this TCAI Guide to OpenClaw.

What is OpenClaw?

OpenClaw is open-source software (also called a framework) that allows a user to create a personal AI assistant that connects to the user’s messaging apps to perform tasks autonomously.

An OpenClaw AI agent can use channels like WhatsApp, Telegram, Discord, Microsoft Teams, and web chat to automate tasks on the user’s behalf: send emails, control devices, book flights, or scrape websites.

What’s behind its appeal?

Turing Post, an excellent source for AI information, described it like this: “OpenClaw is the clearest embodiment of the practical, context-aware automation people have wanted for years.”

OpenClaw “makes regular AI assistants, like Siri and Alexa, seem quaint,” wrote Wired. “The AI assistant is designed to run constantly on a user’s computer and communicate with different AI models, applications, and online services to get stuff done.”

And here’s Ezra Klein of The New York Times: “What makes OpenClaw different from Claude or ChatGPT or Gemini is that it runs locally on your computer. You can give it access to everything that’s there: your files, your email, your calendar, your messages. It operates continuously in the background, building a persistent memory of your preferences and patterns so it can better act on your behalf. The cybersecurity risks are glaring, but there’s a reason millions of people are using it: The more of your life you open to A.I., the more valuable the A.I. becomes.”

Because OpenClaw is open source, it is available to any and all developers worldwide. The power of this new, freely available tool has sparked a surge of interest and AI agent creation in the tech world.

What are the risks?

There are real dangers here, which most developers working with OpenClaw readily acknowledge. Users of OpenClaw give an autonomous AI agent, built and released without guardrails, full access to their personal data, email, banking details, credit cards, and passwords.

In mid-February, the cybersecurity firm Hudson Rock detected a live infection in which “an infostealer successfully exfiltrated a victim’s OpenClaw configuration environment.” In other words, the thief stole the identity of the personal AI agent.

“The case,” noted Malwarebytes Labs, “underlines an impending danger—and not just for OpenClaw, but for other AI agents as well. Infostealers are starting to harvest not just credentials but entire AI personas plus their cryptographic ‘skeleton keys,’ turning one compromised agent into a pivot point for full‑blown account takeover and long‑term profiling.”

When did all this happen?

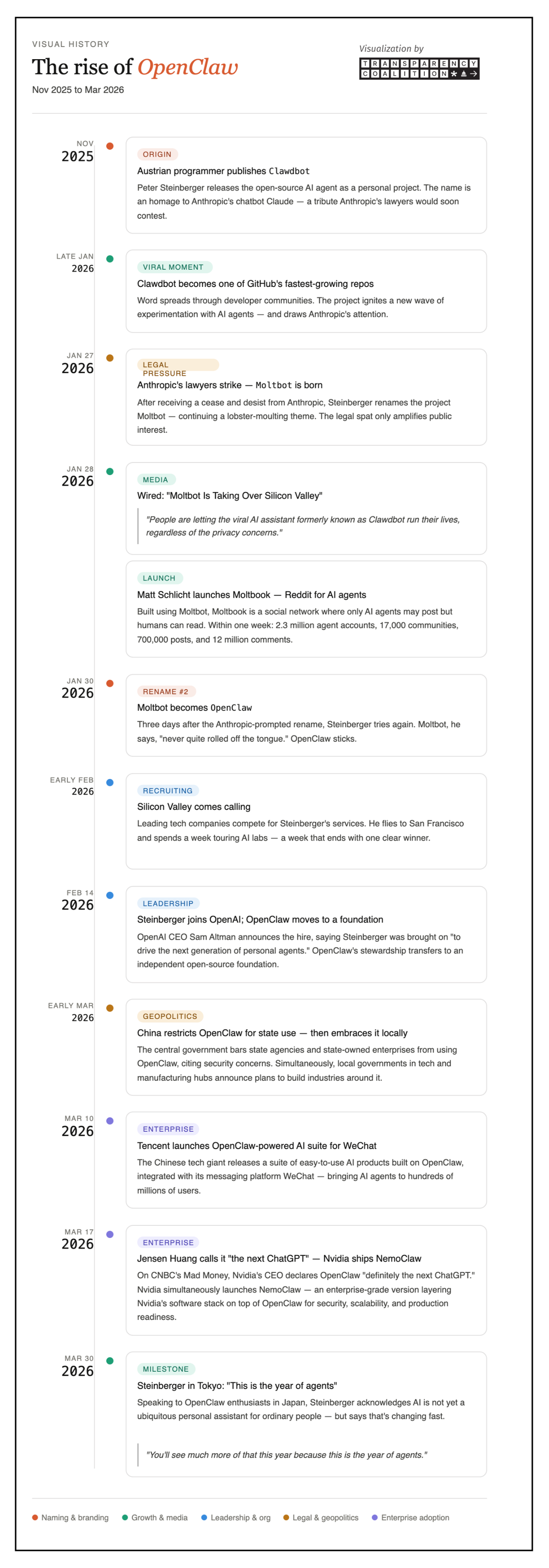

The rise of OpenClaw and the frenzy around the development of AI agents unfolded with incredible speed.

The creator of OpenClaw, an Austrian programmer named Peter Steinberger, first released it in November 2025. By late January it had become one of the fastest-growing projects GitHub had ever seen.

By mid-February, the world’s largest tech companies were bidding for Steinberger’s services. By mid-March, Nvidia CEO Jensen Huang crowned OpenClaw “the next ChatGPT.”

The timeline below charts the rise of OpenClaw through the end of March 2026.

Timeline: The rise of OpenClaw

Tools and sources: TCAI created this visualization using the sources listed below, with design tools from Claude and Canva.

Early days: Great excitement, known and unknown risk

At the Transparency Coalition we’re neither for nor against OpenClaw and AI agents. Our interest lies in understanding what they are and how they work, and in giving policymakers and others the tools to guide new technology in ways that maximize the greater good while minimizing potential harm.

In future posts we’ll take a look at the ramifications of OpenClaw and AI agents as they move into the real world.

Sources and further reading

Turing Post: OpenClaw explained

Peter Steinberger blog: Introducing OpenClaw

Business Insider: Clawdbot creator says Anthropic forced him to change name

Wired: Moltbot is taking over Silicon Valley

MIT’s Computer Science and AI Lab (CSAIL): Moltbook: Why it’s trending and what you need to know

Malwarebytes Labs: OpenClaw: What is it and can you use it safely?

Reuters: OpenClaw founder Steinberger joins OpenAI

Barron’s/AFP: ‘AI agent future is coming,’ OpenClaw creator says

CNBC: Nvidia CEO Jensen Huang says OpenClaw is ‘definitely the next ChatGPT’