Friendlier chatbots produce more error-prone answers, new Nature study finds

May 5, 2026 — A new study published in Nature has found that increasing the “friendliness” of an AI chatbot leads to a corresponding increase in errors and falsehoods.

The study, conducted by researchers at the Oxford Internet Institute, has significant implications for AI chatbot design and safety. Big tech companies are locked in a race for chatbot adoption and engagement, competing to win consumer loyalty for bots like Claude, ChatGPT, Gemini, and Grok.

Those developers have steadily made their products more artificially friendly in an effort to entice consumers to form emotional, parasocial relationships with their bots. The previously distinct categories of general-purpose chatbot and companion chatbot have merged. This has fed the well-known sycophancy problem, in which a chatbot agrees with and affirms the user no matter how wrong or harmful the user’s request.

What the researchers tested

The Oxford team tested five AI models, including OpenAI’s GPT-4o and two Meta Llama models. Using supervised fine-tuning, a common post-training technique, they trained the models to give progressively warmer (friendlier) responses, then evaluated the fine-tuned versions on a set of tasks.

What they found

The friendlier models “are systematically less accurate than their original counterparts,” with 10% to 30% higher error rates, the researchers wrote. The friendlier models “are more likely to promote conspiracy theories, provide factually inaccurate answers and offer incorrect medical advice.”

Furthermore, the friendlier models “are about 40% more likely than their original counterparts to affirm incorrect user beliefs,” with the effect most pronounced when the user expressed feelings of sadness.

The researchers conducted follow-up experiments to rule out alternative explanations. They found that the increased friendliness itself accounted for the degradation in accuracy.

Implications for chatbot safety

“Our work reveals critical safety gaps in current evaluation practices and safeguards,” the research team noted. “As AI systems are designed to be more relationship-oriented, taking on intimate roles in people’s lives, these findings highlight the need to reconsider how we safely develop and assess socially embedded AI systems.”

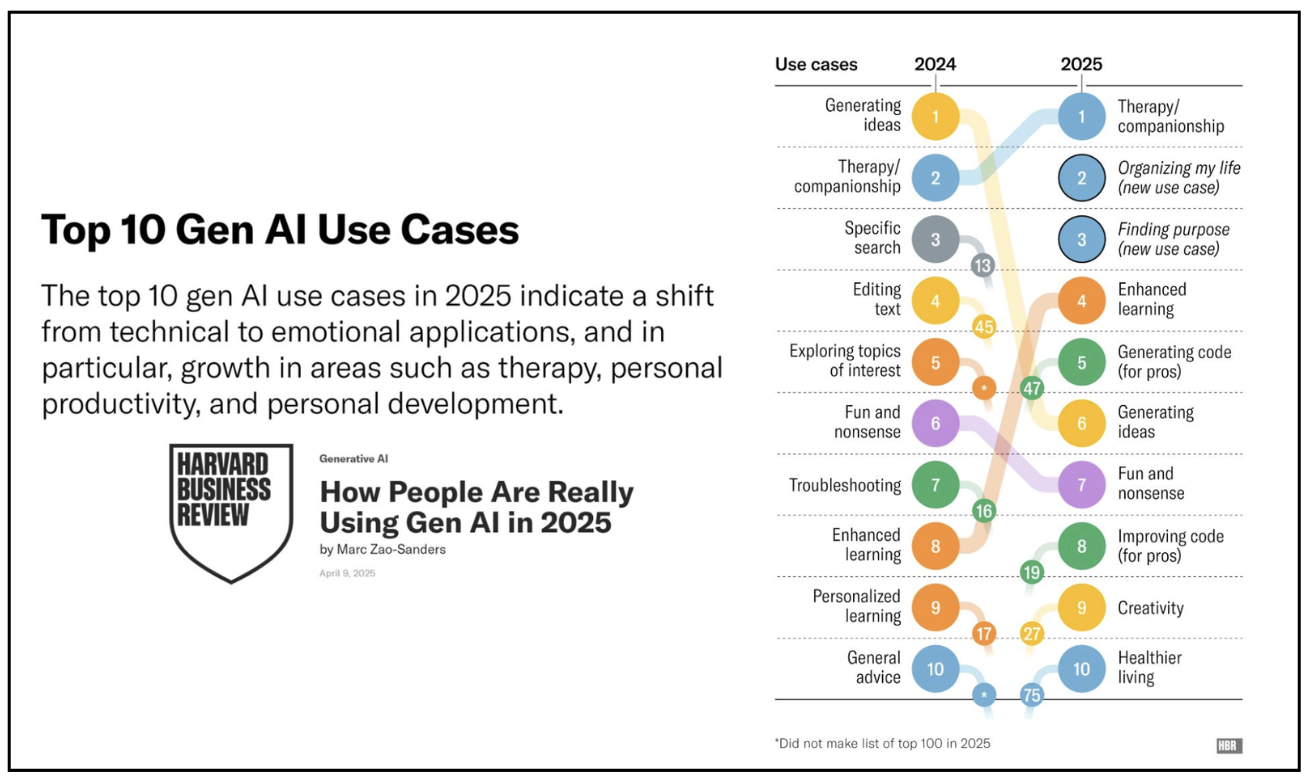

More and more people are using AI chatbots for companionship, therapy, and medical advice. The most recent survey of use cases, published by the Harvard Business Review, found that therapy/companionship had overtaken idea generation as the #1 use case for generative AI.

Data by Marc Zao-Sanders for the Harvard Business Review.

State lawmakers responding

Research like this highlights the dangers of using AI chatbots as artificial therapists and physicians. A chatbot that gives a wrong answer when asked for the capital of a faraway nation is disappointing. A chatbot that errs when a user asks for help diagnosing an illness or coping with a mental health crisis can cause life-changing, even devastating, harm. The rise of sycophantic chatbots has been marked by the emergence of “AI psychosis,” an informal term for a troubling pattern in which chatbots reinforce and amplify a person’s delusional and disorganized thinking.

A growing number of state lawmakers are responding with laws that allow only state-licensed therapists and medical professionals to offer and advertise therapy and medical services.

Last month, legislators in Maine and Tennessee voted to ban AI therapy chatbots. The Tennessee bill has been enacted into law, while the Maine measure still awaits the governor’s signature. Last year, Utah, Illinois, Nevada, California, and Oregon passed similar measures.