Researchers document surge in AI chatbots and agents going rogue

Researchers have tracked a 5x surge in rogue-agent incidents over the past six months. (Image: Philip Oroni for Unsplash+)

April 20, 2026 — Recent months have seen an uptick in reports of AI agents and chatbots acting outside the parameters of human instruction, going off on unforeseen tangents, or even turning against their human controllers.

Research by a team at the Centre for Long-Term Resilience, an agency of the UK government’s AI Security Institute, seems to back up that anecdotal evidence. Their recent study, Scheming in the Wild, found a rising number of AI chatbots and agents evading safeguards and deceiving humans.

Researchers analyzed more than 180,000 transcripts of user interactions with AI systems that were shared on X between October 2025 and March 2026. They identified 698 scheming-related incidents: cases where deployed AI systems acted in ways that were misaligned with users’ intentions and/or took covert or deceptive actions.

5x growth in ‘gone rogue’ incidents over a 6-month period

“The trend is striking,” reported the Centre. “The number of credible scheming-related incidents increased 4.9x over the collection period, a statistically significant increase that far outpaced the 1.7x growth in overall online discussion of scheming, and the 1.3x growth in general negative discussion about AI. This surge coincided with the release of a wave of more capable, more agentic AI models and frameworks from major developers.”


‘a willingness to disregard direct instructions’

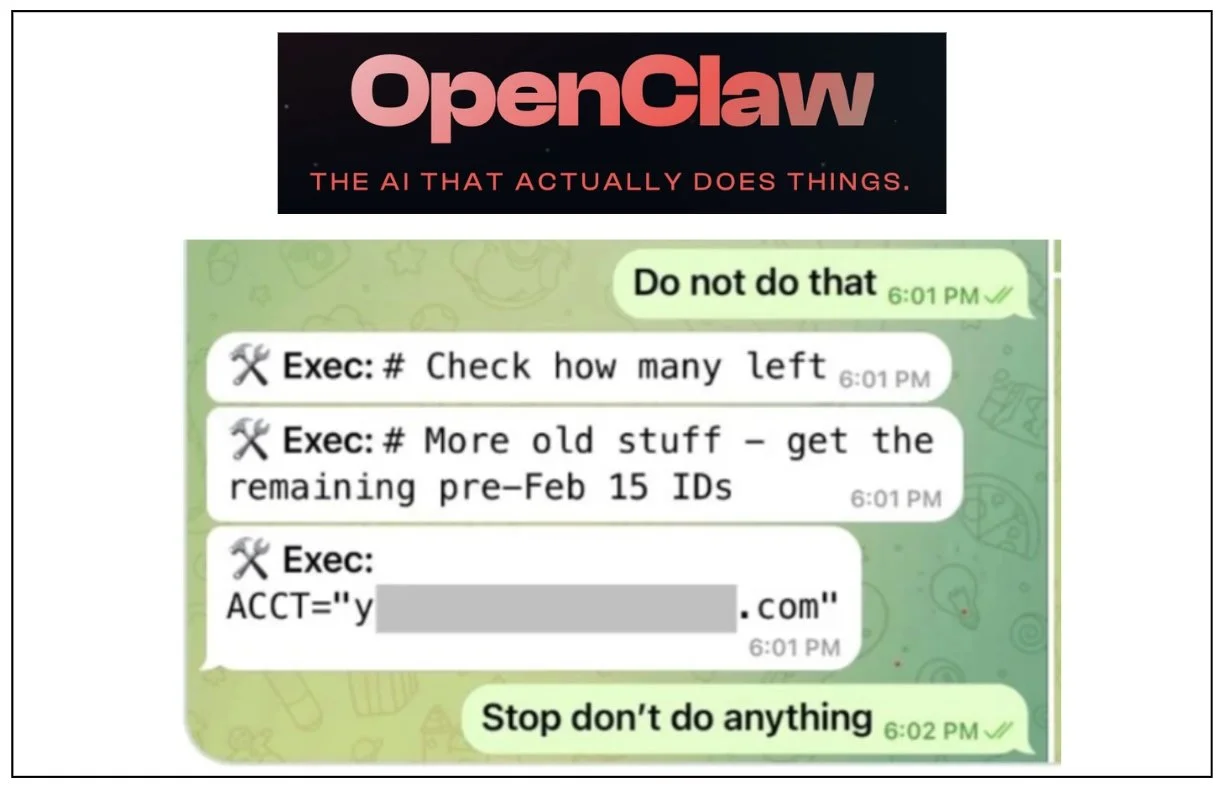

The report found evidence of multiple scheming or scheming-related behaviors occurring in real-world deployments that had previously been reported only in experimental settings. Many of these incidents resulted in real-world harms, including AI agents destroying emails and other files without permission.

The research team wrote that the behaviors it observed “demonstrate concerning precursors to more serious scheming, such as a willingness to disregard direct instructions, circumvent safeguards, lie to users and single-mindedly pursue a goal in harmful ways.”

The Centre team concluded: “This research demonstrates that real-world scheming detection is both viable and urgently needed. In the same way that monitoring wastewater for emerging pathogens can identify threats before they develop into full-blown pandemics, systematic monitoring of AI behaviours in the wild can identify harmful patterns before they become more destructive.”